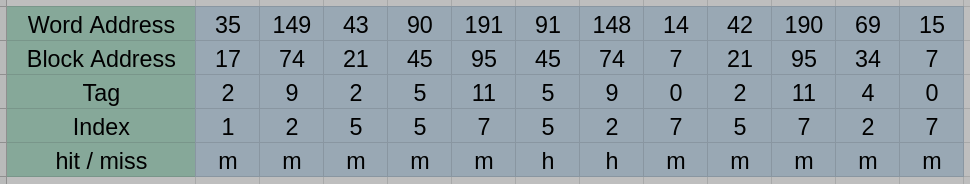

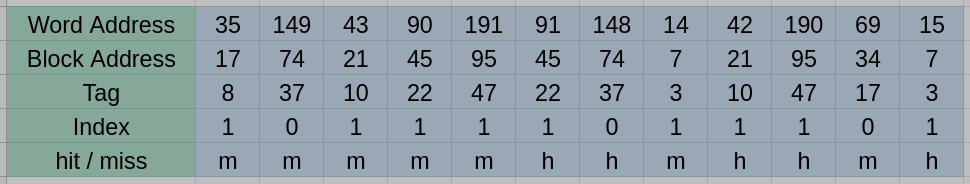

1. [20%] Caches are important to providing a high-performance memory hierarchy to processors. Below is a list of 32-bit memory address references, given as word addresses.

$$35, 149, 43, 90, 191, 91, 148, 14, 42, 190, 15$$

(1) For each of these references, identify the tag and index given a direct-mapped L1 cache with two-word blocks and a total size of 16 words. Also list if each reference is a hit or a miss, assuming the cache is initially empty.

(2) For each of these references, identify the tag and the index given a four-way set-associative L1 cache with two-word blocks and a total size of 16 words. Also list if each reference is a hit or a miss, assuming the cache is initially empty and LRU replacement is used.

(3) Given the above memory address reference, what is the average memory access time (AMAT) if the hit time of the directed-mapped L1 is one cycle and main memory access take 200 cycles. What is the AMAT of the four-way set-associative cache if the hit time is 2 cycles and main memory access also takes 200 cycles?

參考解答:

(1)

(2)

(3) (1) $\text{AMAT} = 167.67 \text{ cycles}$ (2) $\text{AMAT} = 118.67 \text{ cycles}$

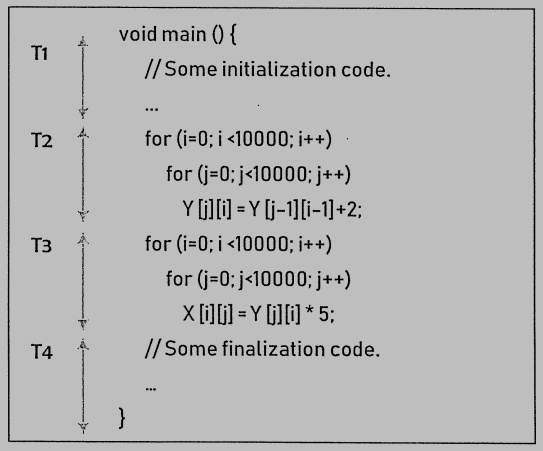

2. [15%] Consider a sequential C program as illustrated in the figure below. The program is divided into four code segments, T1, T2, T3, and T4. We profile the program execution time on the single-core MIPS processor and the execution time for the code segments is: 2ms for T1, 8ms for T2, 16ms for T3, and 2ms for T4. Moreover, two different processor variants are available: one is the quad-core MIPS processor (with the same instruction set architecture as the single-core processor), and the other one is the single-core MIPS processor with an 8-wide SIMD engine. Assume we have converted the program into parallel versions suitable for the two processors, respectively. Please answer the following questions.

(1) Parallelize the program suitable for the multicore processor and calculate the speedup of the parallelized program.

(2) Parallelize the program suitable for the processor with the SIMD engine and calculate the speedup of the parallelized program.

(3) Based on the speedups calculated in (1) and (2), please determine which processor is faster? Why?

參考解答:

(1) $1.75$

(2) For T2 and T3, every 8 array data inside the loop can be processed parallelly by the SIMD engine, so the speedup is $\dfrac{2 + 8 + 16 + 2}{2 + \frac{8}{8} + \frac{16}{8} + 2} = 4.$

(3) 8-wide SIMD engine is faster

3. [15%] Determine whether each of the following statements is True (T) or False (F), and explain your answer.

(1) Graphics applications deliver higher performance because the DRAM chips on GPU cards help reduce memory latency.

(2) A shared memory can be created for computer clusters to exchange data.

(3) A GPU is always faster than a CPU at the cost that the GPU has higher power consumption.

(4) Given the two-level caches, the purpose of the first-level cache is more about miss rate, and the purpose of the second-level cache is more about hit time.

(5) Associativity of the addition operation holds for both integer and floating-point numbers.

參考解答:(1) F (2) F (3) F (4) F (5) F

4. [20%] Consider a computer with a 64-entry TLB in its main processor. The computer has a hard disk spinning at 5400 RPM and holding 40 sectors per track with a sector size of 512 bytes. In addition, the access latency time for the hard drive is 10 milliseconds, and the seek time is 30 milliseconds.

(1) Calculate TLB reach when the computer supports memory page sizes of 1 KB and 4 KB, respectively.

(2) Compute the data transfer rate of the hard disk in KB/s.

(3) Estimate the I/O times when page faults occur; give your I/O times in milliseconds for handling the page fault of a 1-KB page and a 4-KB page, respectively.

(4) We assume that the data transfer time of the hard disk is proportional to the page size. What are the I/O times to transfer the same amount of data (i.e., 4KB data) with 1-KB and 4-KB pages, respectively? In such a case, if we want to minimize the I/O time on the computer, which page size (1 KB or 4KB) is desired?

參考解答:

(1) 64KB, 256KB.

(2) 1800KB/s

(3) 40.556, 42.224

(4) 4KB page size is desired

5. [30%] Considering the real-time systems, which are waiting for events in runtime occur, when an event occurs, the system must act and respond to the event as fast as possible. On such systems, event latency is used to denote the amount of the elapsed time from the event occurring time to the time where it is serviced.

(1) What are the two types of latencies that affect the event latency of such systems?

(2) Which process scheduling scheme (i.e., preemption or non-preemption-based scheme) is preferred to provide less latency?

(3) Suppose that the following processes arrive for execution upon at the arrival time. Each process will run the amount of time as listed below. Based on your answer given in (2), choose FCFS or RR (quantum = 20 milliseconds) to compute the average waiting time and the average turnaround time for the processes.

| Process | Arrival Time | Burst Time |

| P1 | 0 | 53 |

| P2 | 0 | 17 |

| P3 | 0 | 68 |

| P4 | 0 | 24 |

(4) If FCFS is used to schedule the processes given in (3) on one processor, how many possible different schedules are there?

參考解答:

(1) Dispatch latency and Interrupt latency

(2) Preemption-based

(3) Avg. waiting time: 73, Avg. turnaround time: 113.5

(4) $4! = 24$

試題(pdf):連結

有任何問題,或想要完整解釋的,歡迎在下方留言唷!

yummy

想請問第四題的第四小題答案是怎麼來的

mt

傳送4KB data的情況下:

1KB page size需40.556 * 4 = 162.224 I/O time

4KB page size需42.224 * 1 = 42.224 I/O time

所以選擇4KB的page size~

boting

想請問第五題的第三小題的答案是如何得到的

mt

利用RR先把gantt chart畫出來:

1|2|3|4|1|3|4|1|3

Avg. waiting time就是把每個processes的waiting time加起來除以4:$\dfrac{81+20+94+97}{4}=\dfrac{292}{4}=73$

Avg. turnaround time就是把每個processes的結束時間加起來除以4:$\dfrac{134+37+162+121}{4}=113.5$

YH

想請問1-2的 word address 42 為何是hit?

我感覺他會被替換下去所以會是miss…

mt

只有word address 35被換出去喔~前面word address 43用過還留在cache裡面。

ziyn

想請問第一題的第一小題 為什麼從42開始都是miss

mt

到42的時候cache裡面是tag: 5 index: 5的資料所以miss,到190的時候cache裡面是tag: 0 index: 7的資料所以miss,到69的時候cache裡面是tag: 9 index: 2的資料所以miss,到15的時候cache裡面是tag: 11 index: 7的資料所以miss。

loser

請問第四大題的第三小題為什麼不需要考慮 rotation time

mt

因為題目已經給了latency time就不用考慮rotation time了,直接用latency time就可以了。

Shiky

原來是這樣,學到了